Curveball or game changer? ChatGPT, AI tools under watch on Canadian campuses

When ChatGPT emerged last fall, reaction to the new artificial intelligence (AI) tool ranged from wonder and curiosity to consternation and panic — including among school officials already concerned with cheating and academic misconduct in our online age.

Now, about two months later, a wave of professors and academic integrity experts are sharing more measured reactions to developer OpenAI's ChatGPT bot, which can quickly spit out human-like writing, computer code and more after being trained on billions of samples from the web.

They're checking out the bot themselves, raising it with colleagues and even bringing it into classrooms. Some call this a teachable moment for both students and professors, and a reminder for professors to regularly re-evaluate new technologies and how they assess student learning.

For academic colleagues who "do a lot of thinking about the best way to teach and to help students learn in a digitally mediated space," there's no panic about ChatGPT since it's simply the latest in a progression of tech already on their radar, says Luke Stark, a Western University assistant professor of information and media studies.

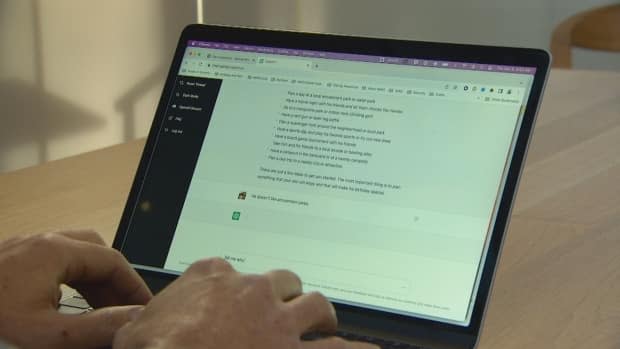

"ChatGPT is just one of many technological curveballs that higher education has had to deal with over the last few years," noted Stark, who researches the history, ethics and social implications of AI, machine learning and similar technologies.

"I see it as an opportunity for all of us to be aware of the new things that we can do with technology and also the ways that this will impact our students."

Stark raised ChatGPT in his classes when it opened to the public last fall, and it's a move he encourages his peers to make as well.

"Do a little research yourself and then bring it up in class. Make it clear to the folks in front of you that you know about these systems… you know that somebody might be using them and make it a teachable moment about the way that technology can shape discourse, language, writing," he said.

"The key thing is to be engaged [and] honest with your students, to remind them that they want to be here. They want to be learning."

Liane Gabora also told her classes about ChatGPT last fall and, after diving right into testing it alongside her students, the psychology professor at the University of British Columbia (UBC) Okanagan campus admits her initial feelings were a mix of amazement and concern.

After tinkering with the bot, getting used to it and discovering some of its limits, however, Gabora is now exploring what new opportunities it may provide when used for engaging assignments that encourage students' creativity and critical thinking.

"They're having fun with [ChatGPT assignments]. They're playing with it. They're exploring it… They're testing the boundary conditions. They're trying all these jail-breaking techniques for getting out of the kind of default restraints," she explained.

Gabora did first point out to her students that the UBC administration is fully aware of ChatGPT, as well as of new software that detects AI-generated essays. However, she thinks the way forward is to incorporate new tools like this.

"We can't go backwards, right? It's here with us and it's going to stay."

Institutions monitoring the situation

UBC is taking an "educative approach" in terms of AI tools and services, according to Simon Bates, the school's current vice-provost and associate vice-president of teaching and learning.

Advisory groups with both faculty and student representation are considering "how we can do more on the educative side of academic integrity: to look at how course designs might be used to support academic integrity, how better to define and communicate to students what is and is not acceptable in their various course contexts," he said in a statement.

The University of Toronto, which at more than 88,000 students across three campuses is Canada's largest university in terms of enrolment, is taking a similar approach.

"We routinely monitor and assess the development of technological tools that might affect learning, teaching and assessment, and are paying close attention to ChatGPT and other emerging technologies," Susan McCahan, vice-provost of academic programs and innovations in undergraduate education, said in a statement.

U of T has formed groups to keep tabs on generative AI technology and to provide guidance to instructors on assessment, while profs and students "are also discussing these technologies, which is crucial to ensure we develop shared understandings and approaches," she said.

Bob Mann, manager of discipline and appeals at Dalhousie University in Halifax, hasn't yet run into a case of anyone using ChatGPT for an assignment — he thinks we're still in a "curiosity and interest" stage — but he feels the school's academic integrity policies are clear.

"We aren't just collecting assignments no matter where they came from. We want them to come from you," he said.

"[It] applies equally to a situation whether you're getting a cousin or a friend to do some work for you or you're borrowing material from the internet or you're getting an artificial intelligence or piece of technology to do it for you."

Mann credits computer science colleagues for flagging the potential for these new AI tools some time ago and feels confident that the "gut instinct" alarms that already sound off for professors and teaching assistants — a submission that greatly exceeds what a student has previously turned in, for instance — will continue to be valuable.

"At the very least, our process is such that we can provide a shot across the bow to a student … to say 'Listen, you're on our radar. You're handing things in and we're reading them and going 'Something's a little off about this.'"

Polarized reactions

Whereas certain colleagues felt the idea of exploring AI tools and their ethical use in a higher education context was "a little bit like Star Trek," it's been a topic academic integrity researcher Sarah Elaine Eaton has been fascinated with for some time.

The associate professor at the University of Calgary's school of education is currently working on a study about AI tools, having first applied for a grant to do so back in 2020.

"I have people messaging me on social media, [from those] saying 'This is plagiarism and it needs to be stopped' to 'This is the best creative disruption in our lifetime,'" she noted.

"Right now I see attitudes kind of being a little bit polarized, so I'm taking a little bit of a middle-of-the-road [approach] and just trying to understand how we can use this without going to extremes."

Eaton believes artificial intelligence will play a growing, game-changing role in society, but she doesn't think it can ever supplant the human touch. "The human imagination isn't going anywhere. Creativity isn't going anywhere," she said.

The explosion of interest and real-world use ChatGPT has seen since November "is a critical part of developing and deploying capable, safe AI systems," a spokesperson for OpenAI said in a statement to CBC News.

"We don't want ChatGPT to be used for misleading purposes in schools or anywhere else, so we're already developing mitigations to help anyone identify text generated by that system. We look forward to working with educators on useful solutions and other ways to help teachers and students benefit from artificial intelligence."