Apple AirPods Pro will get head gestures and better calling with iOS 18

Along with a slew of new features for iOS 18, Apple’s Worldwide Developers Conference keynote has given us a sneak peek at how the AirPods Pro will evolve come the fall.

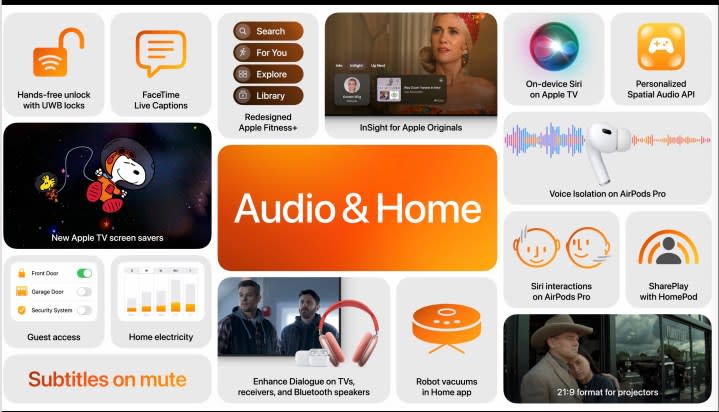

One of the big changes is how you can respond to Siri’s spoken prompts. For instance, when a call comes in and Siri asks if you’d like to accept, you can nod your head to do so or shake it to decline.

The calls themselves will also be getting a boost — a new voice isolation algorithm is said to improve the AirPods’ ability to distinguish your voice from background sounds, which should make it a lot easier for your callers to hear you, even when you’re surrounded by loud noises. The AirPods Pro are already among the best wireless earbuds for this kind of thing, so it will be interesting to see how much better iOS 18 will make them.

Apple has been a strong proponent of spatial audio for years, including giving AirPods Pro owners the ability to set up personalized spatial audio, an algorithmic way to make spatial audio feel more immersive and realistic by using scans of your ears and head to customize the sound.

Today, personalized spatial audio only works on select content like Dolby Atmos Music from Apple Music, but with iOS 18, Apple is releasing an application programming interface (API) for personalized spatial audio that will let third-party developers like game studios take advantage of the feature in a variety of apps.

Apple also has a new dialogue enhancement feature coming for the Apple TV 4K, which will work with the AirPods Pro and a variety of other Bluetooth devices that are connected to Apple’s streaming media player.