Google research shows the fast rise of AI-generated misinformation

From fake images of war to celebrity hoaxes, artificial intelligence technology has spawned new forms of reality-warping misinformation online. New analysis co-authored by Google researchers shows just how quickly the problem has grown.

The research, co-authored by researchers from Google, Duke University and several fact-checking and media organizations, was published in a preprint last week. The paper introduces a massive new dataset of misinformation going back to 1995 that was fact-checked by websites like Snopes.

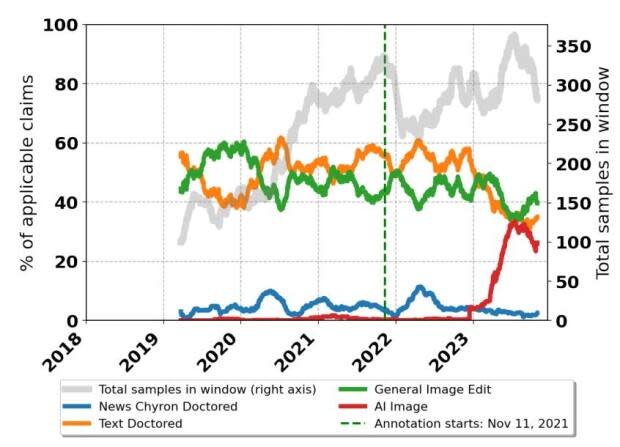

According to the researchers, the data reveals that AI-generated images have quickly risen in prominence, becoming nearly as popular as more traditional forms of manipulation.

The work, first reported by 404 Media after being spotted by the Faked Up newsletter, shows that "AI-generated images made up a minute proportion of content manipulations overall until early last year," the researchers wrote.

Last year saw the release of new AI image-generation tools by major players in tech, including OpenAI, Microsoft and Google itself. Now, AI-generated misinformation is "nearly as common as text and general content manipulations," the paper said.

The researchers note that the uptick in fact-checking AI images coincided with a general wave of AI hype, which may have led websites to focus on the technology. The dataset shows that fact-checking of AI-generated content has slowed in recent months, while traditional text and image manipulations have increased.

The study looked at other forms of media, too, and found that video hoaxes now make up roughly 60 per cent of all fact-checked claims that include media.

That doesn't mean AI-generated misinformation has slowed down, said Sasha Luccioni, a leading AI ethics researcher at machine learning platform Hugging Face.

"Personally, I feel like this is because there are so many [examples of AI misinformation] that it's hard to keep track!" Luccioni said in an email. "I see them regularly myself, even outside of social media, in advertising, for instance."

AI has been used to generate fake images of real people, with concerning effects. For example, fake nude images of Taylor Swift circulated earlier this year. 404 Media reported that the tool used to create the images was Microsoft's AI-generation software, which it licenses from ChatGPT maker OpenAI — prompting the tech giant to close a loophole allowing the images to be generated.

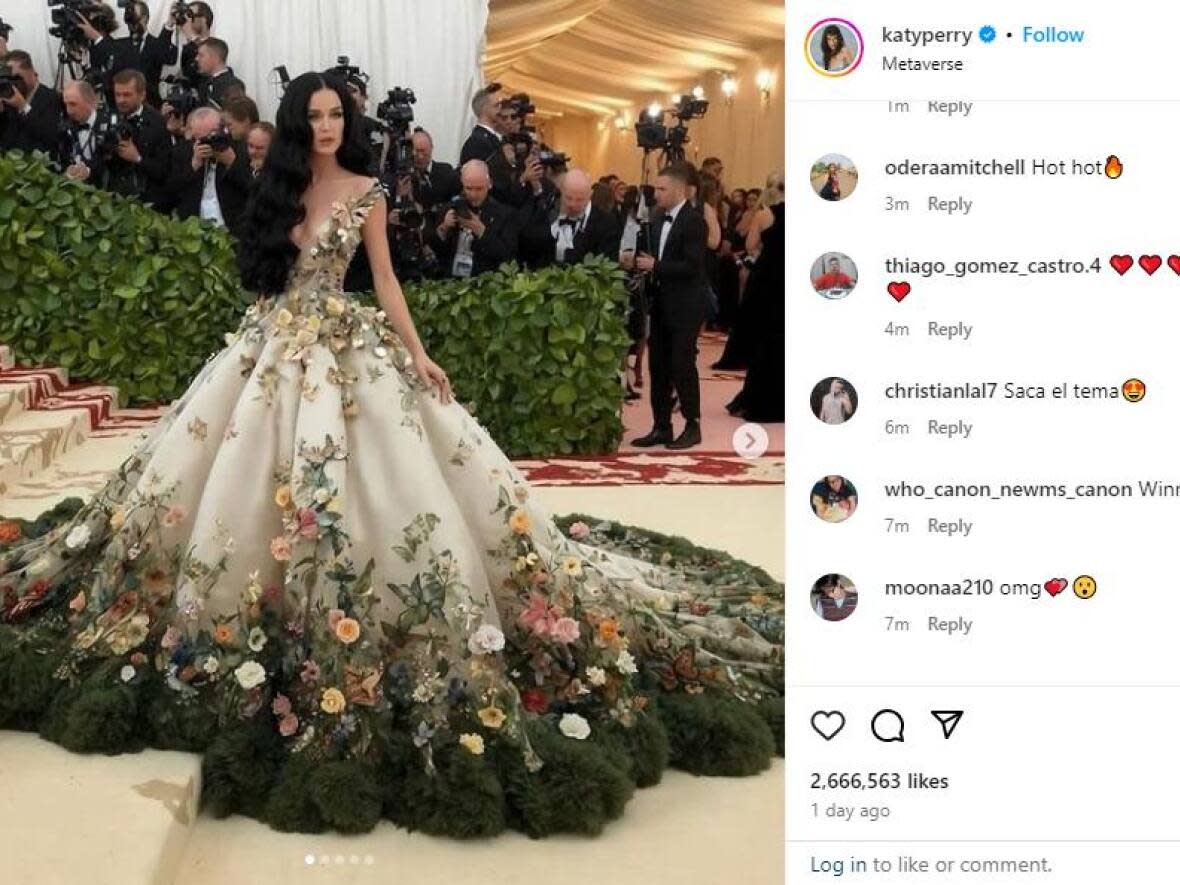

The technology has also fooled people in more innocuous ways. Recent fake photos showing Katy Perry at the Met Gala in New York, which she did not attend, fooled observers on social media and even the star's own parents.

The rise of AI has caused headaches for social media companies and Google itself. Fake celebrity images have been featured prominently in Google image search results in the past, thanks to SEO-driven content farms. Using AI to manipulate search results is against Google's policies.

Google spokespeople were not immediately available for comment. Previously, a spokesperson told technology news outlet Motherboard that "when we find instances where low-quality content is ranking highly, we build scalable solutions that improve the results not for just one search, but for a range of queries."

To deal with the problem of AI fakes, Google has launched such initiatives as digital watermarking, which flags AI-generated images as fake with a mark that is invisible to the human eye. The company, along with Microsoft, Intel and Adobe, is also exploring giving creators the option to add a visible watermark to AI-generated images.

"I think if Big Tech companies collaborated on a standard of AI watermarks, that would definitely help the field as a whole at this point," Luccioni said.