The Gender Bias Behind Voice Assistants

A typical after-work scene at my house goes something like this. “Alexa,” I say. She chimes, then lights up. “Play the new Jenny Lewis album.”

“Playing Jerry Lee Lewis Essentials from Apple Music.”

“Alexa,” I repeat, louder and more aggressively this time. “Play…”

And so on.

My husband says the persistent disconnect between me and Alexa is my fault: I need to pause more, speak more clearly, and maybe throw in a “please” now and then. But not long after she moved in (a necessary sidekick, I was told, to the new sound system he had installed), I started getting the feeling she preferred Bob over me, no matter how polite I was (although often I wasn’t). Once she started piping up every time someone in the house called my name (“Alyssa!”), I was convinced she was truly out to get me.

Was I projecting? Culturally programmed, perhaps, to be biased against other women? Or, in fact, was she?

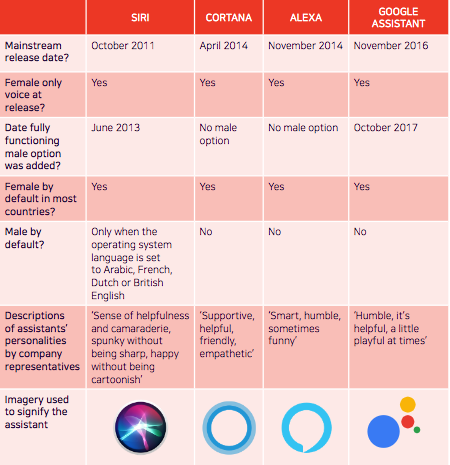

Since virtual assistants came on the scene with Apple’s introduction of Siri in 2011, people have debated whether their undeniably female qualities render them retro or postfeminist, and whether their presence in our lives may reflect, as well as influence, how we relate to and respond to women in real life. Are they modern-day servants or are they, in fact, badass ladybosses running the house? Although the tech companies that make them claim the assistants are not assigned a gender (Apple refers to Siri as “it” even as most of the rest of us default to she/her pronouns), her name and voice and those of her contemporaries, like Amazon’s Alexa and Microsoft’s Cortana, are distinctly female-leaning.

And their collective femininity is not without consequence: In May, UNESCO released a 145-page report about gender bias in the field of artificial intelligence, arguing that voice assistants are inflaming gender stereotypes and teaching sexism by creating a model of “docile and eager-to-please helpers,” programmed to be submissive and accept verbal abuse. Indeed, tell Siri that she’s “an idiot” and she responds with the mild-mannered, “That doesn’t sound good.” Offer an apology, and she’ll easily accept. (Alexa just lights up and dings when you hurl a slur at her—she’s heard you, but chooses not to respond, which may not be any better.)

If you’re thinking it sounds pretty backward that an industry pushing the cutting edge of technology seems to rely on models of communication rooted in gendered stereotypes, you’re right. The UNESCO report cites several reasons for this reliance. The first is that women are underrepresented within the tech industry, resulting in largely male-dominated teams building these technologies. The second is that gender stereotypes lead those (men) developing the programs to assume that users find female voices more appealing. UNESCO argues that if people do, in fact, prefer the female-sounding helper, it’s mainly because we’ve been conditioned to feel more comfortable making requests of women. But by not questioning this conditioning, says Miriam Vogel, executive director of Equal AI, a nonprofit formed to address and reduce unconscious bias in the field of artificial intelligence, “we’re teaching our next generation to double down on those stereotypes.”

What’s more, an ever-increasing number of people are calling on Siri, et al., to perform “emotional labor,” venting to them when they’re feeling lonely or angry and, by the way, often using language they might be less inclined to use if, say, Siri had the voice of The Rock. My 15-year-old stepson certainly talks to Alexa differently than he would if she were his father—but, to be honest, so do I. Sure, she’s “just a machine.” But “if we’re socializing people to treat Alexa like she’s there to take whatever we dish at her, we’re normalizing this sort of interaction,” says Jessa Lingel, Ph.D., an assistant professor of communication at the University of Pennsylvania who studies digital culture. “And even if that’s just normalizing our relationship to AI, as we come to rely on AI more, that could be problematic. It could reinforce the long-held dynamic that men’s voices are public and authoritative and women’s voices are meant to serve privately and be shut down.”

Research, meanwhile, also confirms that the miscommunication between Alexa and me isn’t only in my head. Vogel says the data shows that these devices are less responsive to certain tones inherent in the female voice. Over time, this may perpetuate the idea that women have less power than men, as well as increase women’s inherent frustration with other women and with themselves. Vogel has seen this dynamic play out at home when her two young daughters try to communicate with Alexa. “When she doesn’t understand them, they get frustrated that they’re not being heard,” she says. “They get angry, they start to yell. But they’re also getting naturalized to the reality that their voices, as females, are not as effective.”

It’s up to tech to right the course (and, of course, to consumers to insist they do). Earlier this year, a group of linguists, technologists, and sound designers from the U.S. and Europe released a nonbinary digital voice called Q. “The upcoming generation will give a great deal of attachment to this technology,” says Julie Carpenter, Ph.D., a research fellow at Cal Poly and expert in human behavior with emerging technologies, who worked on Q. “If their voice assistant breaks for a weekend, they’re sad. The voices they’re immersed in do matter. People can interpret a nonbinary voice however they want, as female or male or neither. It becomes about choice rather than conditioning.”

Vogel likes Q but thinks the fix isn’t quite that simple, because un-gendering AI is about perfecting the persona as much as the voice. “Once you’re asking consumers to view an assistant as a replacement to a human, as part of the family, you have a responsibility to be thoughtful not only about how it sounds but how it interacts,” she says. “Electronic assistance is not going to destroy humanity, and using it as it is now doesn’t mean all our kids will treat others with less respect. But I do think a piece of the puzzle is looking under the hood at who’s making the tech, the intentions we’re programming into it, and how it will affect later AI systems. The long-term implications of gendered technology,” says Vogel, “are far-reaching.”

Originally Appeared on Architectural Digest