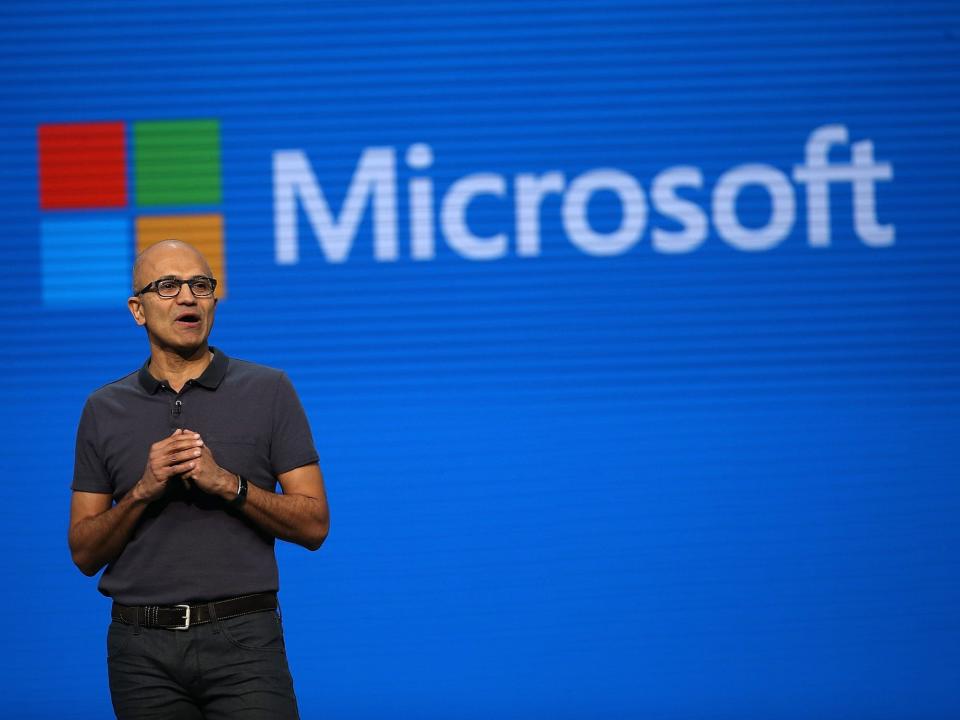

Microsoft CEO Satya Nadella talks AI, closing the Activision Blizzard deal, and his best business decision so far

Microsoft CEO Satya Nadella recently sat for an interview at Axel Springer's Berlin headquarters.

He talked about his career journey, Microsoft's partnership with OpenAI, and leadership, among other topics.

Nadella received the 2023 Axel Springer Award.

Mathias Döpfner, the CEO of Insider's parent company, Axel Springer, recently met with Microsoft CEO Satya Nadella for a wide-ranging interview.

The interview took place in Berlin at Axel Springer's headquarters, where Nadella was honored with the 2023 Axel Springer Award, which recognizes "outstanding personalities who demonstrate an exceptional talent for innovation, create and transform markets, shape culture, and also face their social responsibility."

You can read a transcript of Döpfner's conversation with Nadella below. The interview has been lightly edited for clarity.

Satya, the world knows you're a big cricket fan. Cricket is a bit of a mystery for Germans. But we do realize it's a great time for cricket just now. The Cricket World Cup is taking place in India, and there's talk of the 2036 Olympic Games being in India, too. That is very exciting for cricket fans because cricket is scheduled to become an Olympic discipline again in 2028. Could you tell us a little about your personal fascination with the sport?

As an Indian and a South Asian, I think it's more than a religion. For all of us, it's what we grew up with.

What is it you have learned from cricket about leadership?

I remember one time we were playing a league match in the city I grew up in, Hyderabad, and there was a player from Australia playing in that league match. We were all sitting there admiring this guy and what he was doing on the field. And I distinctly remember my coach standing behind me and saying, "Hey, don't just admire from a distance, go and compete!" For me, that was a great way of saying: when you're on the field, go in there and give it your all. Learn from your competition, but don't be in awe of your competitors.

Another incident I remember is a game where I was playing under one of the captains. One of our players was very good, but for some reason or another he was not happy with the decisions the captain was making on the field. And he was dropping these catches on purpose just to make a point. That really influenced me a lot: this one player brought the entire morale of the team down on purpose just because he was not happy about things. And I do think that, from time to time, you get into situations in the workplace where you have one person who is not really bought in, and that's something you really have to act on.

The situation where I learned the most was when, one day, I was having a very bad streak, and my captain took me off. He came in himself and got a breakthrough or a wicket. But then he gave the ball back to me and, interestingly, I went on to get a bunch of wickets in that match. I asked him why he did that, why he let me play again even though I was having a bad day. He said to me, "I need you for the rest of the season, so I didn't want to break your confidence." And this was high school cricket, and I thought, "My God, this guy is an enlightened captain." So, in some sense, if you're leading a team, you don't just want that team for one time, you need them for the duration. When I look back, there are lots of incidents that have given me a bunch of lessons to take away.

The way you became the CEO of Microsoft is quite a story in itself. You were typologically pretty much the anti-Steve Ballmer and not the favorite at the beginning. And you were quite a sharp contrast culturally. You became CEO as an "internal stranger."

Yeah, that's a great way of describing it actually. Having grown up at Microsoft, first during Bill's tenure, and then Steve's tenure, I don't think I ever got up in the morning and thought that someday Microsoft would have neither Bill nor Steve. And quite frankly, when Steve announced that he was going to leave, it was a real shock to me. I remember quite distinctly one of the board members asking me, "Do you want to be the CEO?" And I replied, "Only if you want me to be the CEO." I told this to Steve, because the board members were like, "You know, if you want to be the CEO, you really need to want to be the CEO." I said, "God, I've not thought about it, I don't know." Steve was very interesting. He said, "It's too late to change, so just be yourself and see what happens." In retrospect, I think the board did what they had to do in the succession process. They looked broadly on the outside, and they looked deep on the inside, and then they made their decision.

There is a story that, during an interview before you joined Microsoft in 1992, you were asked what you would do if you found a child screaming in the street. And you just said you would call 911. Is that story true?

I remember this very distinctly because there were a lot of programming questions throughout the day. I was having to code all the time, and I was exhausted. Then this last guy's question was, "What would you do if you were crossing the road and a child fell down and was crying?" And I said, "I'd go to the nearest phone booth (it was before cell phones) and I would call 911." He got up and said, "It's time for you to leave. When a child is crying, you pick them up and give them a hug and you need to have empathy." I was sure I'd never get a job.

Are you a very rational person?

I have developed more understanding and empathy over the years for the world and people around me. If you train and grow as an engineer, like I did, then you think your goal is to be more rational. But in reality, as Herbert Simon frames it, we are only rational to an extent. Therefore, I think there's no harm in being able to mix rationality with your own understanding and empathy for the people in the world around you. Life's experience teaches us that none of us can get by without the kindness of the people around us.

Were your parents very ambitious for you? One story has it that your father put a poster of Karl Marx in your room, because he wanted you to become a philosopher, whereas your mother put a poster of Lakshmi, the goddess of beauty and love and wealth.

Yes, that's right. Interestingly, both my parents were successful in their own way. My dad was a civil servant, and a little bit of a Marxist economist. And my mother was a professor of Sanskrit.

So, your father was a Marxist?

I would say, he was a left-of-center civil servant. One interesting thing he told me was that being too doctrinaire, too dogmatic, is a mistake. He was happy to go to Hayek or Marx, as long as it met his needs. He was practical in that sense. India was a newly independent country and, as a civil servant, he cared mostly about the development of the country. And that influenced me a lot. To get back to your question, yes, they were ambitious for me. They wanted me to have the room to learn and grow and not just follow what was happening. When I look back at it, as a middle-class kid growing up in India, they gave me a lot more room to develop my own interests, follow my own passions, and to develop my own point of view on topics, without being indoctrinated on anything.

Would you say you had a happy childhood altogether?

Oh, yes, absolutely.

Did you always feel loved, unconditionally loved?

Absolutely.

Do you think that unconditional love is an important factor for future personality development, because you feel that certainty?

I think a lot about that. If you have a loving environment at home, that gives you confidence to tackle the world and the challenges that it poses. It makes a huge difference. I do think that unconditional love and support are massive parts of our development. If you grow up in a home with parents who are invested in your success, not so much in the outcome, but more in giving you the best shots, then that's the greatest break you can have in life.

Last year your son passed away at the age of 26, having been born with cerebral palsy. To what degree has that also influenced you, your definition of family, of unconditional love and leadership style? Is any of that related?

Yes. You know, my son Zain's birth made my wife and I grow up very quickly. And quite frankly, it was one place where I learned a ton from my wife. I was 29, and she was 27, and if you had asked me the day before he was born what we were most worried about, it would have been my wife going back to work, where we could get childcare, and so on. Then he was born, and our life changed a great deal. Even though it was hard for me to accept, it was very clear that his life was going to be challenging. And so, we really needed to buckle up and help him as best as we could. For the longest time I suffered from it, "Why did this happen to me? And why is this now upsetting all the plans and ideas I had about my life?" Then I looked at my wife, and she was getting up in the morning, and she gave up her job as an architect, and she was going to every therapy possible throughout the Seattle area. She was completely dedicated.

I realized that nothing had happened to me, something happened to my son, and as a parent I needed to be there to support him. It took me years; it wasn't like an instant epiphany. But it was a process in which I grew a lot, I would say. And I know it changed me as a leader, and it changed me fundamentally as a human being.

Would you say that it made you more thankful, perhaps even more humble?

Yes, I think it just helped me grow my empathy, understanding that I was able to see the world through my son's eyes as opposed to my own. That, I think, started having a real impact on how I showed up in the workplace, how I showed up as a husband, how I showed up as a friend, a son, a manager, a colleague. It fundamentally changed me where I could relate to when someone was going through something. When somebody says something, I don't just look at it purely from my point of view but try to understand where they're coming from and what's happening in their life. And, quite frankly, the amount of flexibility and leeway given to me by some of the leaders I worked for when my son was young really influenced me. I was a nobody, just a first-level manager at the company, and a lot of what I experienced back then shaped what I wanted to be. It was a very defining moment.

Being a leader with a lot of empathy and determination to change Microsoft's culture from a rather rectangular and masculine one into a more open and understanding one, and focusing more on soft skills than on performance KPIs, is one of your greatest successes and critical achievements as CEO. You grew the company's valuation from $400 billion to roughly $2.5 trillion, and analysts are optimistic it will grow further. What, in your mind, is the most important cultural decision you have made?

Empathy is often seen as a soft skill, but I actually think it's a hard one. And that has to do with design thinking. I've always thought of design thinking as the ability to meet unmet, unarticulated needs. And so, having that ability to absorb what is happening, and then conceive innovation, comes from, in some sense, having deep empathy for the jobs to be done. This is why I believe it's a very important – and perhaps the only sustainable – design and innovation skill for success. But, coming back to your question on culture: by the late 90s, Microsoft had achieved tremendous success. In fact, we became the company with the largest market cap. When that happened, people were walking around campus thinking they were God's gift to innovation. I like to frame this as us becoming know-it-alls. Later, I realized that this was not the case and that we needed a really important change from being know-it-alls to becoming learn-it-alls.

Luckily, I had read this book called "Mindset" by Carol Dweck a few years before I became CEO. My wife had introduced me to it in the context of my children's education. Carol Dweck talks about "growth mindset." In a school setting, you can really encourage kids to have a growth mindset by helping them learn from the mistakes they made. When they're not being know-it-alls, but learn-it-alls, then they are more successful. I felt like that also applies to CEOs and companies at large. So, we picked up on that cultural meme of the growth mindset.

What was your biggest mistake that you made in your professional life? And what did you learn from it?

I wish I could say there was one mistake, but I've made many, many mistakes. I would say that my biggest mistakes were probably all about people.

Not picking the right people or keeping the wrong people?

Yes. The curation of culture, and the holding of standards as a leader, becomes the most important thing. Because everybody can sense the difference between what you say and what you do. Over the years, I would sometimes say some stuff, but not really mean it. And then, well, that doesn't work. That's why getting what you think, what you say, and what you do aligned is a struggle. That's not easy. It might be easy to say, but it's not an easy thing to practice.

Is there any kind of real strategic mistake or just wrong decision that you regret in retrospect?

The decision I think a lot of people talk about – and one of the most difficult decisions I made when I became CEO – was our exit of what I'll call the mobile phone as defined then. In retrospect, I think there could have been ways we could have made it work by perhaps reinventing the category of computing between PCs, tablets, and phones.

What's the best decision you've made so far?

I think the best decision is the operating model of the company. I realized I was taking over from a founder. And I couldn't run the company like a founder. We needed to run the company as a team of senior leaders who are accountable to the entire company. And even the senior leaders cannot be isolated, they need to be grounded. So we found a way to be able to work together. There used to be this characterization, this caricature of Microsoft as a bunch of silos – which I thought was unfair. We were able to debunk that by showing that we are one team working together, flexible in our own ways but very fixed on our outcome goals. And that, I think, was probably the most important thing.

Was that controversial at the beginning?

It was new. We didn't grow up with that. When I look back, Bill and Steve had many management teams that came and went. They were the constants, and they maintained consistency in their heads, and they could manage the business — but they needed great players at all times. They didn't need a team. Whereas, we needed to evolve to become a management team.

Now, the Activision Blizzard deal has finally been approved. Why is that so important? $65 billion is quite a price.

It is, but we're really excited about it. There are a few things that go all the way back for us as a company. Gaming is one, right? When I think about Microsoft, I think of perhaps developer tools, proprietary software, and gaming. Those are three things that we've done from the very beginning. And so, to us, gaming is the one place where we think we have a real contribution to make in consumer markets. If I look at it, the amount of time people allocate to gaming is going up and Gen Z is going to do more of that. The way games are made, the way the games are delivered, is changing radically, whether it's mobile, or consoles, or PCs, or even the cloud. So, we're looking forward to really doubling down both as a game producer and publisher, now that we'll be one of the largest game publishers, and as a company that's building platforms for it.

Where do you see the biggest potential for Microsoft?

For me, the biggest opportunity we have is AI. Just like the cloud transformed every software category, we think AI is one such transformational shift. Whether it's in search or our Office software. How I create documents and spreadsheets or consume information is fundamentally changing. Therefore, this notion of Copilots that we're introducing is really going to be revolutionary in terms of driving productivity and communication. It's the same thing with software development. And the fact that human work, whether it's on the frontline or knowledge, can be augmented by AI Copilot is basically going to be the biggest shift.

We will come back to AI later, but where do you see the biggest risk of disruption for Microsoft? What are you most afraid of?

I'm always looking for two things. In tech, there's no such thing as franchise value. And having lived through four of these big shifts — first, the ascendancy of the PC and Windows, then obviously what happened with web, then mobile and cloud, and now AI — I am very mindful of the tech shift that fundamentally changes all categories. If you don't adapt to the new technological paradigm, then you could lose it all. The technical shift is one aspect. The other is the business model, and you can't really expect the business model of the future to be exactly the same as the business model of the past. Cloud was a great example. We had a fantastic business model with servers, and cloud was completely disruptive. That I think is the thing to watch out for.

Is open source an existential risk for Microsoft?

We thought so in the early days. And it was. In some sense, open source was the biggest governor, or rate limiter, on what happens in proprietary software. I grew up, in fact, doing a lot of interop of Windows and Windows NT on our backend servers with Unix and Linux. And I then realized that the more we interoperate with open source, the better it is for our business.

So, are you saying we should embrace it? Has that always been your spirit? That, if you see a threat or a danger or a challenge, then you tend to embrace it instead of fighting it?

Yeah, I take the non-zero-sum way of approaching things. So, whenever I face challenges, I'm very conscious that, in business, sometimes we all go to everything being zero-sum, and in reality, there are very few things that are zero-sum. One of the things I have learned, at least as a business strategist, is to be very crisp about what those zero-sum battles are, and then to really look for non-zero-sum constructs everywhere. And so open source is one such thing. In fact, we are the largest contributor to open source today. And obviously, GitHub is the home for open source.

Jeff Bezos got famous with the phrase, "One day Amazon will go bankrupt." So far in business history, all companies went bankrupt sooner or later. Is that also true for Microsoft, or is Microsoft too big to fail?

No, there's no God-given right that businesses should just keep going. They should only keep going if they're serving some social purpose, for example, producing something that is innovative and interesting. Corporations should be grounded in whether they are doing anything relevant for the world or not. Longevity is not a goal.

How long do you want to remain CEO? And what are the goals that you have set for yourself?

Right now, I'm in the middle of so many things that I'm not able to think about what will come next. I'm close to being a CEO for ten years and a big believer in the idea that the institutional strength you build is only evident after you're gone. When I look at what Steve left, it was a very strong company that I could pick up and do what I needed to do with. And so, if my successor is very successful, then that would indicate that I have done something useful. I'm mindful of how to build that institutional strength to reinvent oneself. And that's the trickiest part. It's not about continuing some tradition, but more about being able to have the strength to reinvent yourself.

Would it be fair to say that, as soon as you realize you're unable to reinvent yourself, you should retire?

That's a good point. Yes, I think everyone reaches a point where it's time to have things refreshed.

Do you have potential successors in the company?

Absolutely. I think there's lots of people out there and I'm confident the board will do their job when the time comes. We've been very conscious of the fact that it's not about me, not about just one person and one culture or personality. It's about a company with many leaders, who all are very, very capable.

For you as a CEO, but also for the time after this role, what could and should you give back to society?

Both my wife and I, having lived through the experience with our son, are very thankful for all the institutions and people and the community that supported us through some very trying times. And so, one of the things that we're very, very passionate about, is what we can do for families with special-needs children. We work very extensively with the Children's Hospital in Seattle and many of the other institutions that were very supportive. Being a working-class parent with a special-needs kid makes it impossible for you to be able to hold down your job and take care of your child. That's one of the hardest challenges, I think. Another area is first-generation education, because education is the best tool for social mobility. At the university I went to in the United States, the University of Wisconsin in Milwaukee, we're very focused on helping first-generation kids get STEM degrees so they can have better job opportunities.

Let's talk about business ethics. Milton Friedman once famously said: "the business of business is business." I think current conflicts show this is not true, or at least not true any longer. What learnings can we draw from that?

There are two things you brought up, both of which I think are worth talking about. One is, is the business of business just business? I actually do believe so. The reason I say that, fundamentally, is because sometimes business leaders can get very, very confused. The social purpose of a company is to be able to create useful products that generate profits for their shareholders. And so, in fact, Milton Friedman, if you remember, his entire rationale came because a lot of CEOs and corporations were going haywire, and not being disciplined in how they allocated capital and performed for their shareholders.

That being said, we live in the real world, which is kind of your point. Therefore, you cannot, as a business leader, somehow think that the job of business is just business and ignore the geopolitics of the world. In the long run, it will come back to bite you. If you don't have a supply chain that works or that is broken because of geopolitics, then that is your business. So therefore, regarding your point, the way I look at it, even your book says, hey, as a business leader, you need to be grounded on which countries you can do business in. There might be places where you cannot do business because there's a conflict of values between countries.

We cannot tolerate double standards. On the one hand, tightening our ESG criteria every day, while at the same time moving large parts of our business to countries where homosexuality is illegal or where a woman can be stoned to death for adultery. It just doesn't go together. We have to find a middle ground.

That's right. And it's an interesting question: What is our role as a multinational corporation? And do we have a set of values that are universal? And how do they deal with cultural differences? And how do you not try to supersede the democratic process? The way I approach it as a multinational company, or rather an American company, is that we have to take the core values of the United States, and yet meet the world where it is. You have to understand that there are going to be massive cultural differences. You have a set of values that you live by, but you, as a multinational corporation, can't dictate what people in any country choose or don't choose to believe. At the same time, you can still live your values as a company, and I think that's an important distinction.

What is your prediction concerning our business relationship with China, the second biggest economy in the world, ten years from now? Is there going to be a unilateral decoupling by the US from China? Is it going to be a bigger alliance of solidarity between democracies in derisking from China? Or is it more likely we will continue to do business as usual with China, and everything is going to be fine?

I wish I could predict that future, but that is a question for the world. There's a part of me that says, "God, what's up?" Today, decoupling clearly seems to be the conventional wisdom.

Almost one of the very few non-partisan topics in the US.

Exactly. Whether in DC or Beijing, it seems that both sides have voted, and decoupling seems to be the only option. I don't think we have even a political theory for it, where we have two very different powers, one a Western power, one an Eastern power; one an authoritarian communist state, and the other a democracy, with two completely different political economies.

Will we see a duopoly of sorts, with two AI world powers competing against each other in a new AI arms race? Or do you think it is imaginable that we will one day have a kind of unilateral AI governance and infrastructure?

Well, I do think some level of global governance will be required. The way I look at it, a little bit of competition is what will be there. But if there is going to be a successful, let's call it a "regime of control" over AI, then we will need some global cooperation like the IAEA. You know, what we've done in the atomic sphere might be the moral equivalent in AI where China also needs to be at the table.

But why would China respect and accept a different set of rules or a governance that is also based on ethical limits that may not apply in any way to Chinese standards?

That's kind of the issue. At the end of the day, China ultimately needs to decide what its long-term interests are. And whether they are really that different from the long-term interests of the rest of the world.

So, we have to bring them to the table?

We have to. We have real-world concerns today. In the United States and in Europe, we're talking about really practical issues like bias, disinformation, or the economy and jobs. These are all things we will have to tackle in a real-world way, so that AI is actually helpful, not harmful. Those are the things we are dealing with today. And then in the long run, there is the existential risk of a runaway AI. China should care about that. Even if it thinks about the first real-world issues in a different way, because of its political system, it should care about the runaway AI problem, too. So, I think figuring out at least some common ground on governance is going to be helpful.

Elon Musk said he's potentially more afraid about AI than about nukes. Do you agree?

The thing is, you can easily get to a point where you start thinking about self-improving, self-replicating software as something that you completely lose control over. And that, at least, is an area where you can start getting some scary thoughts. My own feeling is that, at the end of the day there are ways we can approach this, where control is built in. Look at automobiles. You could say that automobiles could be God-like. They could be all over the place, running people over and creating accidents. And yet, we have come up with lots of rules and regulations and safety standards. There are a lot of automotive deaths, but we've been able to use this technology very effectively. So, my hope is that we'll do the same with AI, instead of just thinking of it as an existential risk.

Isn't one of the key factors here the degree of competition? As long as we have a variety of players, the likelihood of it going out of control is way smaller than if we only have one or two entities.

You can argue it both ways, can't you? If there are a few players, you can control them. But with many players, it's harder to control. At the end of the day, the only mechanism we have is governments. We can talk about lots of things, but in our system, it's about the G7 having a set of standards. That's why I think the US and Europe have a massive role to play.

I heard the American regulator, Lina Khan of the FTC, say we need a transatlantic solution. Would you agree?

Yes, 100%. I believe that the more we can get to a place in AI where there are global standards, the better. And for global standards to work, the US and Europe have to come together.

I would like to touch on a topic from the perspective of a publisher, or rather of all creative industries. It's very important for those who create intellectual property, that they continue to have an incentive to work. So, there has to be a business model behind it. Do you have any idea whether and how the big AI players could help these businesses to keep a business model and be rewarded when their IP is used?

That's right. At the core of it, the value exchange here has to be more beneficial to the publishers. We know that, with the current state of the internet, there are a few aggregation points where most of the economic rent is collected. And only very few breadcrumbs are thrown to the others in economic terms. I feel that, if there's more competition at any aggregation point, whether it's just social media, or other sources of traffic, the people who benefit directly will be the publishers. The more LLMs there are out there being used by people as aggregation points, the more these LLMs can drive traffic back. And that is actually a good thing for publishers.

But do you have any idea how it could be done in concrete terms?

I think that there are two things here: one is that, in a large language model like Bing Chat, for example, we have at least tried to make sure that everything that is a response is actually linked. Our goal is to first contract with our publishers and find out if we can drive traffic back through citations and links. Second is to think about sharing any advertising revenue with the publishers. I feel that that's our starting point, and a lot of what we are doing together rests on that. There are some complications going forward. After all, with synthetic data training, I think that the incentive is that we create more synthetic data. And if you're training on synthetic data, where you don't have stable attribution to likeness, that becomes a hard thing. So, there is some technological disruption we will have to be mindful of. The fact is that no publisher will allow you to crawl their content if there isn't a value exchange, and the value exchange has to come in two forms. One is traffic, and the other is revenue share.

One of the biggest coups you landed was definitely the investment in OpenAI. Could you give us a little background on how this came about and how your conversations with Sam Altman went?

I've known Sam for a long time. I knew him when he had his first startup, and I kept in touch with him over the years. We started working with Sam in 2016 as one of the first cloud providers that gave them a lot of credits. In 2018 or 2019, Sam came to us and said they needed more investment in terms of compute.

And wasn't it a non-profit project at that time as well?

Correct. I think, fundamentally, they're still a non-profit, but they needed to create a for-profit entity that could then essentially fund the compute. And we were really willing to take the bet early on what was then essentially a non-profit entity. The fact that we were able to build and get Copilot and ChatGPT up and running made me very confident that there's something different about this generation of AI and what it's doing. Its application in GitHub made me even more confident that you can build something really useful, and not just have a technological breakthrough. So, we went all in.

Many years ago, you told me about your motivation to create competition with Google in search. You once said, "I want to make Google dance." Is Google dancing yet?

No. I think, when you have three percent share of global search, and you're competing with somebody who has 97 percent, even a small gain here and there is an exciting moment. But Google is a very strong company, and they are going to come out strong. Bard is a very competitive product already. And they have a new model with Gemini. Google has a number of structural advantages right there: they already have the share, they control Android, they control Chrome. I always say that Google makes more money on Windows than all of Microsoft. It keeps us grounded.

But Google has to disrupt itself. Basically, generative AI chatbots have the potential to replace search as we know it. At least, I use it like that. In the past, whenever I had a question, I used to put it in Google. Now I put it into Bing Chat or Bard. It creates a different user behavior, which could eventually lead to a fundamental change in user habits. Don't you agree?

That's right. I think you're fundamentally speaking about changes in user habits. But that's proving to be a lot harder than you might think, because the strength of Google is the default nature of the Google search. It's so ingrained as a search habit, and change is moving slowly. But having said that, you made a good point that a lot of us today are now going to Bing Chat or to ChatGPT or Bard to have our questions answered. I think things are changing, but it's a slow-moving transition. Google can make that transition, and they're very much committed to making it. And you're right in pointing out that their business model will fundamentally have to change. So, it's a little bit like what happened to us with Windows. We had a fantastic business model until it wasn't anymore. Therefore, I think Google will be facing more challenges, and will have to fundamentally rethink its business model in the long run. But they definitely have a head start, because they already have all the users.

But things are developing so fast. I don't know if you sometimes use Any Call. It's such a nice feature where you can just give a voice command or ask a question, and you immediately get answers even to very sophisticated questions. Nobody knows how user habits will change. Do you have a vision? What is your most radical, your most disruptive vision for the future of our habits?

Take Copilot for example: The way we are building it is to say, I want to use it to plan my vacation or do some shopping or write some code or prepare for a meeting. It's kind of like a personal agent to me. It's kind of like the original PC. What was the PC about? It was about empowering you to be able to do things. Now, Copilot is in many ways like a PC, except that one can just go to this personal agent and say, Write me a document. I'm meeting Mathias, please prepare me for that meeting with all the summarization of all my mails from our partnership, and it comes back with all that. It's kind of like asking an assistant, a smart analyst, about all the things you want.

What I find so fascinating is that we humans are now trying to deal with this disruptive change, which may be more important than the invention of the internet or the invention of the automobile, by defining the limits of what AI can or cannot do. And I am constantly discovering that I was wrong. A couple of months ago, I thought AI wouldn't be able to have or create emotions. But AI does it perfectly well, perhaps even better than human beings, who are not as good at faking emotions sometimes. Or take creativity or humor, we always say AI cannot do that. It's not true. Do you see any limits today? Or do you think we should define some?

The way I see it, as someone in the business of creating more powerful and capable AI, is that I wouldn't bet against it. My question is rather, what will humans do with more capable AI?

You mean, would Picasso be better with AI or without it?

Yes, exactly. If we have a new tool, what will we do with it? What did we do with cars? What did we do with planes? What can we do with computers today? We've done a lot of interesting things. I am betting on human ingenuity to do things with this new tool. One of the most exciting things for me is that, if you think about it, eight billion people can have a doctor, a management consultant, a tutor, and more in their pocket. It's a true democratization of knowledge.

The British AI expert Mustafa Suleyman is convinced that AI is particularly strong in this emotional context of things. Would you agree with him?

I think he's right in some sense. We've been working with Epic software, which is one of the largest healthcare ISVs in the United States that everybody uses. A significant portion of the US healthcare system uses their medical record system. And they were telling me about how some of the doctors are finding that the drafts that AI is creating for them are very empathetic. Human beings are sometimes inconsistent in how we express ourselves, whereas the AI can be more consistent. For example, when writing a job description, it can make sure that the job description invites people of all genders and persuasions to apply and not have bias. So, I think AI can actually be very helpful in many ways to be a little more empathetic and more understanding of the world.

I recently interviewed a virtual version of Axel Springer, the founder and namesake of our company. I asked him really tough questions about culture and soft-factor issues, and found his answers more convincing than my own, I have to say. Do you see any reason or danger that humans will end up serving machines instead of machines serving humans?

That is the question.

That is the question.

The question is, how do you stay in control? And, at the end of the day, how can we ensure that AI does not manipulate human beings, even in this cognizant context of empathy. It cannot use its soft skills to manipulate humans. So I think there will be this level of thinking about software and its verification. About how it will be deployed, how will it be monitored, what adverse activities will be happening. These are some of the frontiers, what is considered the alignment challenge. Another challenge is understanding how AI makes its own decisions inside the box.

Is it thinking consciously?

That's the question. We don't even fully understand what consciousness is. You can see it from a neuroscience perspective, but can we mathematically or mechanistically understand how LLMs do their predictions, for example? It's a frontier of science where there is still work being done. You have to be very, very conscious of how you deploy what we have today in ways that human agency, and humanity, is in the loop. Human agency and judgment are, in fact, very much part of the loop. And we must not lose control.

If AI has such strong intellectual ability (IQ) and emotional intelligence (EQ), and is even good at creativity, humor, self-improvement, and accelerating progress, what areas of intelligence are left for humans? And what advice would you give mankind concerning what to focus on in the next decades?

Well, look at this new example of the inverted classroom, where people are saying, "Look, we know you're going to use one of the LLMs, like Bing Chat or ChatGPT, for your assignments. What we want to know are the prompts. We'll give you the answer, now tell us the prompts." That's critical thinking. Because you learn so much more. I think that critical thinking, good judgment, how to think in a broad way, and not being afraid to learn, could be the new benefits. I sometimes say to myself, "My God, I wish I understood more biology." And now I can ask dumb questions and have things explained at my pace. Our ability to consume more knowledge and to grow our own knowledge base can improve. I think there'll be lots of interesting implications from having this powerful tool.

Exactly. You wrote a book, "Hit Refresh," in 2017, and you defined a couple of rules there for good leadership. One was that you should take on a challenge even though you are afraid of it. Does that mean that being afraid or feeling fear is healthy?

Absolutely. In some sense, being uncomfortable, for example, about the situation you are in, the situation the world is in, the situation the company is in, makes you question how to get to a better place. Sometimes, getting rid of your constraints, or those of others, can help you conceive some new approach, some new technology or new input. I think that's our job, isn't it? Leadership is about leaning into some of the fear and discomfort.

Would you even go a step further and say that a person who does not experience fear cannot be courageous?

I've never thought about that, but I think that's a great way to describe it. You need courage, and you need it every day. It's courage that will help you overcome your fears.

Disclosure: Axel Springer is Insider's parent company.
